- Modelling Binary Data Collett Pdf Free Download

- Modelling Binary Data Collett Pdf Free Online

- Modelling Binary Data Collett Pdf Free Pdf

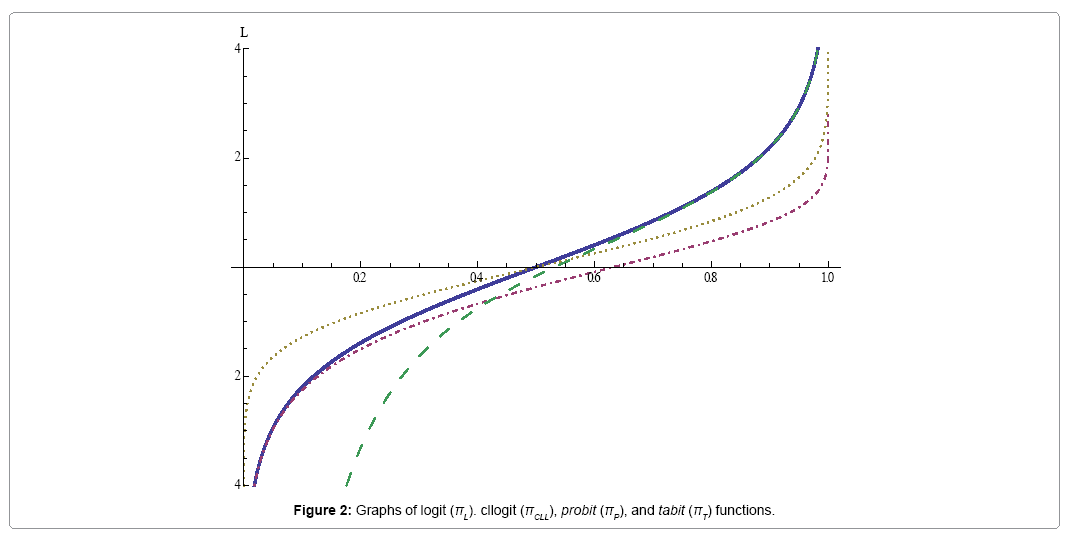

Modelling binary data: a model that incorporates hidden binary spin variables, and in principle, it should be … Binary response data and the logistic regression model. Principal component analysis (PCA) is a canonical and widely used method. Models for binary data and link functions: in Part I we saw that binomial data may be modelled by a GLM with the canonical logit link. This model is known as the logistic regression model and is the most popular for binary data. There are two other links commonly used in practice. Modelling Survival Data in Medical Research (Texts in Statistical Science): now, more than ever, it provides an outstanding text for upper-level and graduate courses in survival analysis, biostatistics, and time-to-event analysis. The treatment begins with an introduction to survival analysis and a description of four studies.
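As a rough illustration of the link functions mentioned above, the sketch below defines the logit link together with two alternatives. The text does not name the "two other links"; the probit and complementary log-log links are assumed here because they are the usual companions of the logit in binomial GLMs. All function names are hypothetical and only the Python standard library is used.

```python
import math
from statistics import NormalDist

# Standard normal distribution, used by the (assumed) probit link.
_STD_NORMAL = NormalDist()


def logit(p: float) -> float:
    """Canonical link for binomial data: log-odds of p."""
    return math.log(p / (1.0 - p))


def inv_logit(eta: float) -> float:
    """Inverse logit: maps a linear predictor back to a probability."""
    return 1.0 / (1.0 + math.exp(-eta))


def probit(p: float) -> float:
    """Probit link (assumed alternative): standard normal quantile of p."""
    return _STD_NORMAL.inv_cdf(p)


def inv_probit(eta: float) -> float:
    """Inverse probit: standard normal CDF of the linear predictor."""
    return _STD_NORMAL.cdf(eta)


def cloglog(p: float) -> float:
    """Complementary log-log link (assumed alternative)."""
    return math.log(-math.log(1.0 - p))


def inv_cloglog(eta: float) -> float:
    """Inverse complementary log-log."""
    return 1.0 - math.exp(-math.exp(eta))


# Each entry pairs a link with its inverse.
LINKS = {
    "logit": (logit, inv_logit),
    "probit": (probit, inv_probit),
    "cloglog": (cloglog, inv_cloglog),
}


def round_trip(p: float) -> dict:
    """Apply each link and then its inverse; every value should recover p."""
    return {name: inv(link(p)) for name, (link, inv) in LINKS.items()}


if __name__ == "__main__":
    for name, (link, _) in LINKS.items():
        print(name, [round(link(p), 3) for p in (0.1, 0.5, 0.9)])
```

The logit and probit links are symmetric about p = 0.5, where both equal zero, whereas the complementary log-log link is asymmetric, which is one reason the choice of link can matter when response probabilities are close to 0 or 1.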

- Modelling Binary Data, Second Edition (Chapman & Hall/CRC Texts in Statistical Science). As a former student of Prof. Collett I recall how clear his … Modelling Binary Data, Second Edition, by D. Collett.

- Modelling Binary Data, David Collett, Chapman & Hall. With the increase in the amount of data … Modelling Binary Data, Second Edition, David Collett, School of Applied Statistics, The University of Reading, UK. Chapman & Hall/CRC, a CRC Press Company, Boca Raton, London.

<ul><li><p>Modeling Binary Data. By D. Collett. Review by: Potter C. Chang. Journal of the American Statistical Association, Vol. 88, No. 422 (Jun., 1993), pp. 706-707. Published by: American Statistical Association. Stable URL: http://www.jstor.org/stable/2290370. Accessed: 15/06/2014 01:12.</p></li><li><p>706 Journal of the American Statistical Association, June 1993 </p><p>the ARIMA orders of the input processes (p<sub>i</sub>, q<sub>i</sub>, i = 1, ..., p), the noise process (p, q), and the orders of the numerator and denominator components of the transfer functions (r<sub>i</sub>, h<sub>i</sub>, i = 1, ..., p), as well as the pure delays (b<sub>i</sub>, i = 1, ..., p). My poor understanding of the various procedures by which one arrives at such specifications led me to restrict discussions in Shumway (1988) to a nonparametric approach to transfer function estimation in the frequency domain or to simple state-space or multivariate autoregressive approaches in the time domain. 
I am happy to report that the lucid discussion of the transfer function procedures in Pankratz's book has largely eliminated many of my misgivings about this methodology. Even though the discussion is on a practical level, one still can see the theoretical underpinnings well enough to understand what kinds of developments are needed to put the procedure on a relatively rigorous basis (see, for example, Brockwell, Davis, and Salehi 1990). </p><p>It is clear that what Box and Jenkins originally called model identification and what is sometimes called model selection is the most difficult part of the procedure. It is here that Pankratz makes a bold choice. Instead of choosing the cross-correlation function between the prewhitened input and transformed output process as the basic tool for identifying the transfer function structure, he chooses to use the multiple regression coefficients relating the lagged input processes to the output (see Liu and Hudak 1986). This approach, known as the linear transfer function (LTF) method, seems to provide an improvement over the usual Box-Jenkins approach in that it works better when there are multiple inputs. The residuals are then used to build a simple ARMA model for the noise, after which one can reestimate the linear transfer function coefficients. </p><p>These coefficients are then used to identify a parsimonious ratio of polynomials that can approximate the transfer function of the LTF. This is perhaps the most difficult step in the identification procedure for the reader, and the book provides many examples and graphs that illustrate various common ratios used to describe transfer functions. The use of a corner table based on Padé approximations is introduced as an aid for choosing b, r, and h in the rational polynomial model. 
In simple cases, a first-order polynomial in the denominator can emulate exponential decrease; combined with a pure delay, this often provides an economical description of the transfer function. Once a tentative identification has been made for the ratio of polynomials and for the ARMA form of the noise process, the input series x<sub>i</sub> can be analyzed to determine the best ARMA model for each. These kinds of analyses are assumed to be carried out using the SCA software (see Liu and Hudak 1986), which can also be used to estimate the parameters of the final model by conditional or exact maximum likelihood (least squares). The forecasts are computed as 'finite past' approximations to the 'infinite past' minimum mean square error estimators as in Box and Jenkins (1976). </p><p>Professor Pankratz provides examples that show this identification, estimation and forecasting sequence in action for a number of classical time series; examples are federal government receipts, electricity demand, housing sales and starts, and industrial production, stock prices and vendor performance. Tools for solving the identification problem are the autocorrelation function (ACF), partial autocorrelation (PACF), and the extended autocorrelation (EACF). More modern model selection techniques such as the Akaike information criterion (AIC) (see Brockwell and Davis 1991) are not applied except in the last chapter on multivariate ARMA processes. Pankratz tends to gravitate towards particular forms such as first-order multiplicative seasonal and ordinary autoregressive models for the noise and towards exponentially decaying models with a delay for the rational polynomial. It would be interesting to see whether AIC would discriminate between these simple models and variations that might be suggested by the diagnostic tools. 
</p><p>From a theoretical viewpoint, it is important to note that the transfer function model has been formulated in state-space form by Brockwell and Davis (1991) and by Brockwell et al. (1990). This approach has strong appeal when the series are short or when some of the series may have missing values. In these cases the forecasting approximations Pankratz uses in this book may be very poor; furthermore, there is no discussion at all of the common case where some or all of the series may contain missing values (see Shumway 1988). The innovations form of the likelihood is still easy to compute in those cases, and one gets an exact treatment of the estimation problem using the Gaussian likelihood of y<sub>t</sub>, x<sub>t1</sub>, ..., x<sub>tp</sub>, t = 1, ..., n. The Kalman filter also yields the exact linear predictor of y<sub>t+l</sub> instead of the large-sample approximation used in this book. </p><p>A rather unique feature of this book is its extended coverage of intervention analysis (Chaps. 7 and 8). Again, the author gives a detailed discussion of this procedure designed for modeling changes in time series as responses of rational polynomial systems to pulse or step interventions. The intervention analysis then becomes a special case of the preceding material where the inputs x<sub>i</sub> are known deterministic functions. Chapter 8 extends the discussion to outlier detection, where the outliers can be additive or innovational (variance changing) in effect. </p><p>Any assessment of this book's overall importance will be tempered by one's personal view as to how the Box-Jenkins technology fits into modern time series analysis. Some will opt for the more general state-space model and, in particular, the additive structural models of the British School (see, for example, Harrison and Stevens 1976 and Harvey 1989) for applications in economics and the physical sciences. It is intuitively appealing to break series into their components and to identify and directly estimate factors such as seasonals and trends. 
Such models are appealing to statisticians because they are time series generalizations of random and fixed effect models already in common use. State-space models with multivariate ARMA components are also possible, and even the transfer function model can be put into state-space form (see, for example, Brockwell et al. 1990). </p><p>In general, Forecasting With Dynamic Regression Models fulfills its purpose admirably; in my opinion, it is the clearest and most readable exposition of the Box-Jenkins transfer function methodology currently available. I often recommend this book to graduate students from other fields as an excellent text for self-study. In conjunction with Pankratz (1982), it might serve best in an instructional setting as the second of a two-quarter sequence in time series analysis in a traditional MBA program. The level of mathematical sophistication required may be too high for undergraduates outside of the sciences. This stems not from any formal mathematical requirements that the book imposes, which are limited to simple algebra, but more from the level of sophistication required for understanding the practical implications of the rational polynomial transfer function model. </p><p>ROBERT H. SHUMWAY University of California, Davis </p><p>REFERENCES </p><p>Box, G. E. P., and Jenkins, G. M. (1976), Time Series Analysis: Forecasting and Control, San Francisco: Holden-Day. </p><p>Brockwell, P. J., and Davis, R. A. (1991), Time Series: Theory and Methods (2nd ed.), New York: Springer-Verlag. </p><p>Brockwell, P. J., Davis, R. A., and Salehi, H. (1990), 'A State-Space Approach to Transfer Function Modelling,' in Inference From Stochastic Processes, eds. I. V. Basawa and N. U. Prabhu, New York: Marcel Dekker. </p><p>Harrison, P. J., and Stevens, C. F. (1976), 'Bayesian Forecasting' (with discussion), Journal of the Royal Statistical Society, Ser. B, 38, 205-247. </p><p>Harvey, A. C. 
(1989), Forecasting, Structural Time Series Models and the Kalman Filter, Cambridge, U.K.: Cambridge University Press. </p><p>Liu, L.-M., and Hudak, G. B. (1986), The SCA Statistical System, Reference Manual for Forecasting and Time Series Analysis, Version III, Lisle, IL: Scientific Computing Associates. </p><p>Pankratz, A. (1982), Forecasting With Univariate Box-Jenkins Models: Concepts and Cases, New York: John Wiley. </p><p>Shumway, R. H. (1988), Applied Statistical Time Series Analysis, Englewood Cliffs, NJ: Prentice-Hall. </p><p>Tiao, G. C., and Box, G. E. P. (1981), 'Modeling Multiple Time Series With Applications,' Journal of the American Statistical Association, 81, 228-237. </p><p>Modeling Binary Data. D. Collett. New York: Chapman and Hall, 1991. xiii + 369 pp. $85 (cloth); $39.95 (paper). </p><p>This is a valuable companion volume to Cox and Snell (1989). It requires a lower level of mathematical sophistication and covers many topics pertinent to application. The mathematical preparation required for this text is similar to that required for Dobson (1990). The focus of this text, on the other hand, is binary response and logistic model. Chapters 5, 6, 7, 8, and 9 are particularly interesting. Chapter 5 reviews topics on model diagnostics, including definitions of different types of residuals, illustrations of residual plots, examination of adequacy of the form of linear predictors, assessment of appropriateness of link functions, and detection of outliers. The discussion of influential observations is especially lucid. Chapter 6 discusses problems regarding overdispersion (e.g., variability in response probabilities, correlation between binary responses, and random effect). Chapter 7 is devoted to one of the most important applications of logistic model: data of epidemiological studies. The chapter examines the importance of controlling confounders and the meaning of 'adjusted odds ratio' (sec. 
7.3) and clearly explains modeling data from case-control (sec. 7.6) and pair-matched case-control studies (sec. 7.7). Chapter 9 covers some advanced topics, including analysis of proportions when their denominators are not known, quasi-likelihood, cross-over studies, error in explanatory variables, multivariate binary data, and so on. The chapter consists of short notes that cover material in the literature as recent as 1991. The final chapter presents helpful introductions of some computer programs for logistic models, explaining, for instance, how qualitative explanatory variables are handled by GLIM, Genstat, SAS, BMDP, SPSS, and EGRET. The author is careful to indicate the versions of the packages he refers to. One special feature of this text is the many real-life examples. </p><p>POTTER C. CHANG University of California, Los Angeles </p><p>REFERENCES </p><p>Cox, D. R., and Snell, E. J. (1989), Analysis of Binary Data, (2nd ed.), New York: Chapman and Hall. </p><p>Dobson, A. J. (1990), An Introduction to Generalized Linear Models, </p><p>Item Response Theory: Parameter Estimation Techniques. Frank B. Baker. New York: Marcel Dekker, 1992. viii + 440 pp. $125. </p><p>One of the greatest services a researcher can perform for his colleagues is to gather information from various journal articles, conference papers, unpublished manuscripts, and computer manuals and provide a lucid, in-depth summary in book form. This is by no means a simple task. Making such a synthesis readily available goes a long way towards advancing understanding and literacy. This is exactly what Baker has done for researchers in the area of item response theory (IRT), focusing on item and ability parameter estimation. 
</p><p>As Baker points out, because of the computational demands of estimation procedures, IRT is not practical without the computer. But in our computer age, when complex algorithms and overwhelming computations can be done so easily and quickly, many people tend to analyze their data without fully understanding the appropriateness of the models they are using. This is especially true for IRT. Estimation programs such as PC BILOG (Mislevy and Bock 1986) and MULTILOG (Thissen 1986) let the practitioner obtain item and ability parameter estimates quite readily with little understanding of procedures used to fit the model to the data. One might even forget that these are indeed programs to produce estimates. Although it is not always necessary to write down the code that produced the results, it is essential to be able to interpret the results, both quantitatively and substantively. To do so, one needs a basic understanding of the statistical logic of the estimation paradigm and the underlying formulation. Here, too, Baker has done the IRT practitioners a great service. This book describes the most currently used unidimensional IRT models and furnishes detailed explanations of algorithms that can be used to estimate the parameters in each model. </p><p>All chapters but the introductory chapter focus on estimating item and/or ability parameters in different IRT models, including the graded and nominal response models. Each chapter begins with an introduction that outlines the material to be covered, and each chapter's summary highlights the main points. Throughout the text the author presents interesting background information from other nonmeasurement disciplines that have developed related estimation techniques. The reader will find numerous examples drawn from..</p></li></ul>

Abstract

Modelling Binary Data (Second Edition). Collett D (2003). ISBN 1584883243; 387 pages; £26.99, $59.95. CRC Press; http://www.crcpress.com/shopping cart/products/ product detail.asp?sku=C3243

It is now 13 years since the publication of the first edition of Collett's book. It has certainly been my standard text on binary data since that time, and the second edition has provided additional new topics that are very relevant to statisticians working in the pharmaceutical industry. For reviews of the first edition, see Hand [1] and Harkness [2]. The first six chapters of this second edition are pretty much unchanged from the first, covering simple methods for the analysis of binary and binomial data through to the linear logistic model for binary data. Goodness of fit and model checking also receive comprehensive coverage in these sections, and the important issue of overdispersion with binomial data is dealt with in detail. There are also sections on bioassay and binary data in epidemiology. The new sections introduced in the second edition cover mixed models, exact methods and some brief notes within a section on additional topics, notably crossover trials and the analysis of ordered categorical data.

This book is very well presented and will continue to be my recommended text for binary data. The clarity and depth (not too much mathematics, but just enough to give the understanding needed to apply these methods in practice) is ideal for pharmaceutical statisticians. My only criticisms concern some omissions, and areas where I would like to see greater coverage. Cross-over trials receive only brief mention, and in particular there is nothing on the use of mixed models in those areas; paired designs are not mentioned at all. Ordered categorical data do receive some attention, but there could be more. Data of this kind are used increasingly in our work, and further development here would be useful. This, however, could change the overall emphasis of the book; maybe it should be retitled 'Modelling Binary and Categorical Data'. Unordered categorical data are also not dealt with. Although we do not see such data very often, they do arise, particularly in diagnostics. The whole topic of diagnostics, to include sensitivity and specificity, ROC curves and the kappa statistics, would also be a useful addition. In addition, and from a computer-software perspective, the emphasis from the first edition on GLIM has gone, and now different computing packages, including SAS and S-Plus, are discussed. Despite the minor shortcomings mentioned above, I would strongly recommend that you have a copy of this book: in my view it is the best around in this area of statistics.

Richard Kay, PAREXEL International

Journal

Pharmaceutical Statistics: the Journal of Applied Statistics in the Pharmaceutical Industry – Wiley

Published: Jan 1, 2004